Summary

An opinion article in MIT Technology Review examines how AI and advanced algorithms for monitoring worker activities are creating an extreme imbalance in the power dynamics between employers and workers. Privacy groups complain that employees are living in fear of a “ruling algorithm” that compiles performance metrics in project management tools. And as AI agents arrive in the workplace, one AI company, Artisan, is marketing its agents with taglines like “Artisans won’t complain about work-life balance”.

The number of humanoid robotics companies is rising in China as the government implements an action plan to double the number of robots in 2025 compared to 2020. Many electric vehicle (EV) companies are starting to produce robots because their profit margins on EV sales are falling and they can reuse their existing supply chain infrastructure for robot components.

On the applications side, Crunchbase has announced that it is shifting from providing startup data to providing AI-based predictions on company growth trajectories, funding round outcomes, layoffs and acquisitions. Crunchbase’s management argues that the data-provider business is ultimately doomed, and that value can only come from carving out a niche as data analyzers and predictors. An article in The Conversation presents a study on using AI to predict non-contact injuries to rugby players. The AI model predicts non-contact leg injuries with 82% accuracy and ankle sprains with 75% accuracy, and team managers can use it to design personalized training programs for players.

A TechCrunch article reviews Anthropic’s Claude family of models, which have become particularly popular for coding, math problems and image captioning. As with all popular generative AI models, Claude models are suspected of having been trained on copyrighted material from the Internet. For its part, Anthropic’s terms of use include a clause that purports to defend Claude users in case of litigation. On the issue of copyright, a Member of the European Parliament has criticized the EU Artificial Intelligence Act for leaving a loophole for copyright violations. Drafted before the surge in generative AI, the EU AI Act requires companies to comply with EU copyright law. However, there are exemptions for text and data mining, which some believe Big Tech firms are exploiting to train their models.

Another problem with generative AI is hallucination: generated content that is plausible but incorrect. In software, hallucinated code can introduce security holes, compliance issues and technical debt. An InfoWorld article offers developers suggestions for keeping hallucinations out of their code.

Elon Musk has announced the launch of Grok-3, the latest version of the xAI chatbot. The first version of Grok was launched in late 2023, with Musk marketing it as “anti-woke”, and it dispenses with many of the usual guardrails, such as those against vulgarity and against reproducing images of well-known people. TechCrunch reported that xAI briefly censored Grok-3: when asked “Who is the biggest misinformation spreader?”, the chatbot’s displayed thought process revealed that it had been instructed not to mention Elon Musk or Donald Trump.

Finally, Microsoft has announced a breakthrough in quantum computing: a chip that contains eight topological quantum bits (qubits). Its qubit design relies on Majorana particles, hypothetical particles first proposed almost ninety years ago that are their own antiparticles. This property can be exploited to tell whether the total number of electrons in a qubit is odd or even, and this parity represents the zero or one of the qubit.

Table of Contents

1. A new Microsoft chip could lead to more stable quantum computers

2. China’s EV giants are betting big on humanoid robots

3. Crunchbase’s AI can predict startup success with 95% accuracy – will it change investing?

4. How to keep AI hallucinations out of your code

5. Elon Musk’s startup rolls out new Grok-3 chatbot as AI competition intensifies

6. EU accused of leaving ‘devastating’ copyright loophole in AI Act

7. How AI can predict rugby injuries before they happen

8. Claude: Everything you need to know about Anthropic’s AI

9. Your boss is watching

1. A new Microsoft chip could lead to more stable quantum computers

Microsoft has announced its own breakthrough in quantum computing: a chip that contains eight topological quantum bits (qubits). Whereas classical computers represent information as zeros and ones (bits), a qubit is a quantum-mechanical unit that can be zero, one, or a superposition of both. The main challenge in building quantum computers is that current hardware introduces errors into computations, so several physical qubits are needed to represent a single reliable logical qubit. Google and IBM are building their qubits from superconducting materials, while Microsoft is pursuing an approach intended to be more stable. Its prototype chip uses a thin “nanowire” of indium arsenide placed next to aluminum, which, at temperatures close to absolute zero, makes the wire superconducting. The qubit design relies on Majorana particles, hypothetical particles first proposed almost ninety years ago that are their own antiparticles. In theory, the nanowire allows an electron to “hide” by splitting into two halves, one at each end of the wire, which makes the stored information more stable. It then becomes possible to tell whether the total number of electrons is odd or even, and this parity represents the zero or one of the qubit. Microsoft has been working on this quantum computing R&D project for the past 20 years.
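The parity readout described above can be illustrated with a purely classical toy model. It assumes that the only thing being read out is whether a total electron count is even or odd, and it ignores superposition and the physics of Majorana particles entirely; the function name `parity_readout` is hypothetical and chosen only for illustration.

```python
def parity_readout(n_electrons: int) -> int:
    """Toy model: map a total electron count to a bit (0 = even, 1 = odd).

    Classical illustration only: a real topological qubit can hold a quantum
    superposition of the two parity states, which this function ignores.
    """
    if n_electrons < 0:
        raise ValueError("electron count cannot be negative")
    return n_electrons % 2


# Adding or removing a single electron flips the parity, and therefore the bit.
for count in (10, 11, 12):
    print(count, "->", parity_readout(count))
```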

2. China’s EV giants are betting big on humanoid robots

Chinese electric vehicle (EV) companies are expanding into humanoid robotics as profit margins in the Chinese EV industry continue to decline. The number of EV companies in China fell from 480 to 40 between 2018 and 2023, and profit margins have fallen from 6.1% to 4.6% since 2021. The Chinese EV market nonetheless remains strong, with 54% of cars sold in 2024 being electric or hybrid, compared to only 8% in the US. Three factors explain the move into humanoid robot manufacturing. First, the Chinese government is implementing a Robotics+ action plan with the goal of doubling the number of robots in 2025 compared to 2020, and many EV companies receive government grants from this program. Second, the supply chain infrastructure developed for the EV and autonomous vehicle industry is equally useful to the robotics industry, e.g., LiDAR sensors (which measure the distance to an object by emitting a short laser pulse and timing how long the reflected signal takes to return), battery packs and object recognition software. Third, Chinese companies control 63% of the global supply chain for robotic components, so they can produce robots at a lower cost. For instance, the Unitree H1 robot costs 90’000 USD, half the cost of Atlas, a comparable model from Boston Dynamics. The result is an emerging dominance of Chinese companies in humanoid robotics. The GAC Group, a state-owned carmaker, has for example developed the GoMate robot to work on its EV production line and will mass-produce it from 2026. Of the 160 humanoid-robot manufacturers operating worldwide in June 2024, 60 were Chinese, compared to 40 in Europe and 30 in the US.
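The LiDAR time-of-flight principle mentioned above reduces to one formula: the pulse travels to the object and back, so the distance is half of the speed of light multiplied by the round-trip time. The sketch below assumes the pulse travels at the speed of light in a vacuum (the small slowdown in air is ignored); the function name is illustrative.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact value in a vacuum, by definition of the metre


def lidar_distance_m(round_trip_time_s: float) -> float:
    """Distance to a target from the round-trip time of a laser pulse.

    The pulse covers the distance twice (out and back), so the one-way
    distance is half of (speed of light x elapsed time).
    """
    if round_trip_time_s < 0:
        raise ValueError("round-trip time cannot be negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2


# A reflection received 200 nanoseconds after emission puts the object about 30 m away.
print(f"{lidar_distance_m(200e-9):.2f} m")  # prints "29.98 m"
```

The nanosecond scale of the example shows why LiDAR units need very precise timing electronics: a timing error of one nanosecond corresponds to roughly 15 cm of distance.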

3. Crunchbase’s AI can predict startup success with 95% accuracy – will it change investing?

Crunchbase, the well-known business information platform that provides data on companies, startups, investors, funding rounds, acquisitions and industry trends, is reorienting itself into a platform that provides AI-powered forecasts on company growth trajectories, funding round outcomes, layoffs and acquisitions. Crunchbase hopes to influence how investors act in private markets. The company claims that its AI models achieve 95% to 99% accuracy when tested on historical data. Its training data comes from contributed data, anonymized user engagement patterns (Crunchbase currently has 80 million active users) and public data sources. Analysts note that many financial companies are experimenting with AI-based investment decisions, but that clients remain skeptical of AI-generated advice. Crunchbase’s management nonetheless believes that the data-provider business is ultimately finished because information will eventually flow freely, even from behind paywalls. They point to an existential threat from AI systems that can “build better insights by combining it with all the data on the Internet”. Data providers must therefore carve out a niche as data analyzers and predictors.

4. How to keep AI hallucinations out of your code

This InfoWorld article offers suggestions for addressing hallucinations by generative AI coding agents, that is, code that is plausible but incorrect. According to the May 2024 Stack Overflow developer survey, 62% of developers were using AI coding tools. Code hallucinations show up as code that does not compile, algorithmically incorrect code, inefficient code, or code that references non-existent functions or documentation. Their consequences include security holes, compliance issues and increased technical debt. A Microsoft engineer points out how hard hallucinations can be to detect, citing the example of a function that was passed a parameter as a value instead of an object, an error that the automated testing tools failed to catch. The authors argue that development teams should put processes in place that both minimize the likelihood of hallucinations and detect them in code. To minimize hallucinations, prompts should be detailed and focus on a small scope of code. Developers should check the coding agent’s training cutoff date (to ensure that it is not referencing out-of-date APIs) and configure the agent to use retrieval-augmented generation (RAG) so that it can draw on reliable data sources and enterprise code bases. To detect hallucinations, the authors suggest highlighting AI-generated code so that developers can focus their attention on it during testing, using a different AI coding agent to test the code (while continuing to use existing automated testing tools), and adopting a mindset in which generated code is treated as a suggestion rather than a replacement for human expertise. For one analyst, the software developer’s role is shifting toward quality assurance (QA) and product definition.
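One of the hallucination types described above, references to functions or packages that do not exist, can be partly caught mechanically before testing. The sketch below is an illustration, not a method from the article: it uses Python’s standard `ast` and `importlib` modules to list modules and `module.attribute` references in generated code that the local installation does not actually provide. The function name `find_missing_references` is hypothetical. It only handles plain `import x` and `import x as y` statements, and because it imports the named modules, it should only be run in an environment where importing them is safe.

```python
import ast
import importlib


def find_missing_references(source: str) -> list[str]:
    """List imported modules and module attributes in `source` that do not exist locally.

    Only `import x` / `import x as y` are checked; `from x import y`, methods on
    objects and dynamically built names are out of scope for this sketch.
    """
    tree = ast.parse(source)
    bound_modules = {}  # name bound in the generated code -> real module object
    missing = []

    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            for alias in node.names:
                try:
                    module = importlib.import_module(alias.name)
                except ImportError:
                    missing.append(alias.name)  # the package or module does not exist
                    continue
                if alias.asname:
                    bound_modules[alias.asname] = module
                else:
                    # "import a.b" binds the top-level name "a" in the namespace
                    top_level = alias.name.split(".")[0]
                    bound_modules[top_level] = importlib.import_module(top_level)

    for node in ast.walk(tree):
        # Flag "name.attr" where "name" is an imported module lacking "attr"
        if (
            isinstance(node, ast.Attribute)
            and isinstance(node.value, ast.Name)
            and node.value.id in bound_modules
            and not hasattr(bound_modules[node.value.id], node.attr)
        ):
            module_name = bound_modules[node.value.id].__name__
            missing.append(f"{module_name}.{node.attr}")

    return missing


generated = """
import math
import json as j
root = math.sqrt(16)
area = math.circle_area(2.0)
data = j.loads("{}")
"""
print(find_missing_references(generated))  # prints ['math.circle_area']
```

A static check like this only complements the existing automated tests the article recommends keeping: it cannot catch the value-versus-object mistake cited by the Microsoft engineer, which requires tests of behavior.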

5. Elon Musk’s startup rolls out new Grok-3 chatbot as AI competition intensifies

Elon Musk has announced the launch of Grok-3, the latest version of the xAI chatbot. The first version of Grok was launched in late 2023, with Musk marketing it as “anti-woke”. Many of the usual guardrails against vulgarity, use of copyrighted material, reproducing images of well-known people and generating sexually explicit images were removed. Musk is promoting Grok-3 as “maximally truth-seeking”, citing an integrated reasoning engine called DeepSearch that explains the chatbot’s thought process. TechCrunch reported that xAI briefly censored Grok-3: when asked “Who is the biggest misinformation spreader?”, the chatbot’s thought process revealed that it had been instructed not to mention Elon Musk or Donald Trump. This instruction has since been removed, and the chatbot now mentions Trump and Musk in its answer. Both Trump and Musk have recently spread falsehoods (e.g., the claim that Ukraine’s President Volodymyr Zelenskyy started the current war with Russia). Musk said that the “Colossus” supercomputer cluster in Memphis was used to train the chatbot. Finally, The Guardian mentions a study showing that Grok actually leans left on topics like gender equality and diversity programs, which Musk blamed on the training data.

6. EU accused of leaving ‘devastating’ copyright loophole in AI Act

An MEP (Member of the European Parliament) has criticized the EU Artificial Intelligence Act for leaving a loophole in the protection of artists and content owners against copyright violations. Drafting of the EU’s AI Act began before the surge in generative AI led by OpenAI. The AI Act states that companies must comply with the 2019 Copyright Directive, but there are exemptions for text and data mining. The MEP claims that Big Tech firms are exploiting these exemptions to train their generative AI models. Until now, tech companies have not been obliged to declare where their training data comes from, but new rules on disclosing training data are expected to take effect in August 2025. For the MEP, the situation is compounded by the EU’s decision to abandon the AI Liability Directive it had been working on. This legislation is smaller in scope than the AI Act, but it contained a provision to compensate people for harm caused by AI products and services. The withdrawal follows the recent Paris summit on AI and is believed to be motivated by a European desire to allay US fears of over-regulation.

7. How AI can predict rugby injuries before they happen

This article reviews a study on using AI to predict non-contact injuries to rugby players. A non-contact injury is one in which no other player is involved. Rugby is a useful context for studying sports injuries since the game demands a combination of speed, strength and precision from players. Non-contact leg injuries are estimated to account for 50% of player absences in rugby today. Previous studies of rugby injuries looked at individual factors like age, flexibility or previous injuries. The AI-based study collected 1’700 data points from professional rugby players over two seasons. The data included known injury factors like weight, previous injuries, strength and fitness measures, muscle and joint screening test results and training intensity. The model predicted non-contact leg injuries with 82% accuracy and ankle sprains with 75% accuracy. It identified reduced hamstring and groin strength, reduced ankle-joint flexibility, greater muscle soreness and frequent changes in training intensity as the factors that most influence injuries. The authors plan to extend their model with other factors, such as psychological indicators. Team management can use the model to predict injuries and to provide personalized training programs to players.

8. Claude: Everything you need to know about Anthropic’s AI

This regularly updated TechCrunch article covers the Claude family of models from Anthropic. These models have become particularly popular for coding, math problems and image captioning. The family’s three main models are the lightweight Claude 3.5 Haiku, the mid-sized Claude 3.7 Sonnet, and the large Claude 3 Opus. Claude 3.7 Sonnet integrates a “thinking” mode in which it explains its reasoning while generating an answer. Despite being the largest model, Claude 3 Opus is currently the least capable of the three, though this is expected to change. All Claude models have a context window of 200’000 tokens (the amount of input, such as a prompt or retrieved RAG content, that a model can process when generating a response). This is roughly equivalent to 150’000 words, or a 600-page novel. Claude models do not currently search the Internet, so they cannot answer questions about current events. Anthropic provides an API for the models, which are also available on Amazon Bedrock and Google Cloud’s Vertex AI. The API supports prompt caching (storing and reusing prompt contexts across API calls) and batching (grouping low-priority inference requests together) to improve performance. An Enterprise version of Claude offers larger context windows (up to 500’000 tokens) and services like GitHub integration.
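The token-to-page equivalence quoted above implies a rule of thumb of about 0.75 words per token and about 250 words per page. The sketch below simply applies those two ratios, which are derived from the article’s figures rather than from any real tokenizer; actual token counts vary with language and content, and the function names are illustrative.

```python
WORDS_PER_TOKEN_NUM, WORDS_PER_TOKEN_DEN = 3, 4  # ~0.75 words per token (rule of thumb)
WORDS_PER_PAGE = 250  # implied by 150'000 words ~ 600 pages


def approx_words(tokens: int) -> int:
    """Rough word count for a given number of tokens (integer arithmetic)."""
    return tokens * WORDS_PER_TOKEN_NUM // WORDS_PER_TOKEN_DEN


def approx_pages(tokens: int) -> int:
    """Rough page count for a given number of tokens."""
    return approx_words(tokens) // WORDS_PER_PAGE


for label, window in (("standard", 200_000), ("Enterprise", 500_000)):
    print(f"{label}: {window:,} tokens ~ {approx_words(window):,} words ~ {approx_pages(window):,} pages")
```

Integer arithmetic keeps the estimates exact for round figures, which is all a back-of-the-envelope conversion like this can claim.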

As with all popular generative AI models, Claude models are suspected of having been trained on copyrighted material from the Internet. Anthropic may argue that it can use such content under the fair-use doctrine of US copyright law. Anthropic’s terms of use include a clause that purports to defend Claude users in case of litigation: “We will defend our customers from any copyright infringement claim made against them for their authorized use of our services or their outputs, and we will pay for any approved settlements or judgments that result.”

9. Your boss is watching

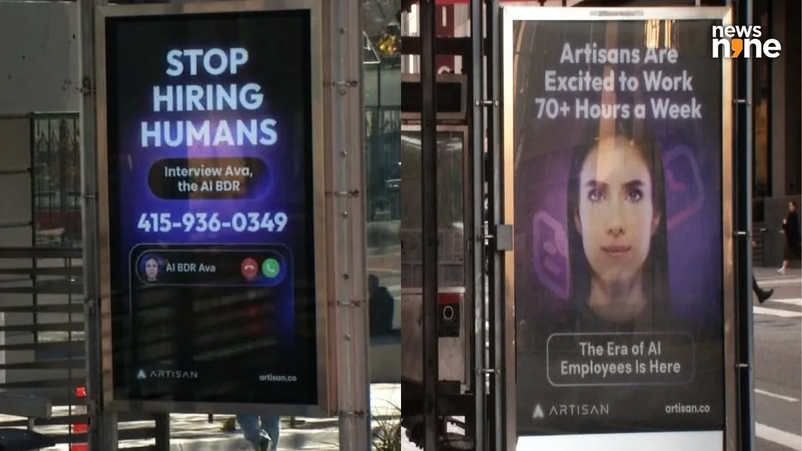

This opinion article by Rebecca Ackermann, a writer, designer and artist based in San Francisco, examines how AI and advanced algorithms for monitoring worker activities are creating an extreme imbalance in the power dynamics between employers and workers. The author cites a 2022 New York Times investigation which found that eight of the ten largest private employers in the US track the productivity of individual workers. The surge in productivity monitoring began during the COVID-19 pandemic, and the employee monitoring software market is expected to be worth 4.5 billion USD in 2026. Employee surveillance can come from project management software that compiles employee metrics in real time. This has led privacy groups to complain of employees living in fear of a “ruling algorithm” and of a “deactivation crisis” in which workers lose their jobs because they cannot meet metrics set by an algorithm. Studies show that surveillance software demotivates employees and creates mistrust of management.

Nine News Photo of Billboard.

Amazon is cited several times in the article. A US Senate committee examined the company’s practices and found that Amazon was using black-box algorithms, fed with data collected from monitoring employees, to set quotas. The report found that Amazon workers were twice as likely to be injured as other warehouse workers. It also found that the company fired several hundred workers at a single facility for failing to meet quotas over the course of one year, in 2018. The arrival of AI agents on the heels of generative AI is increasing the pressure on employees. One company, Artisan, is marketing its agents with taglines like “Artisans won’t complain about work-life balance”, and claims that an AI agent costs 96% less than a human doing the same job.